The future of Stereo – How to be immersive

If we have two ears on the sides of the head, rather than a single one in the middle of the forehead, it is so that our brain can tell where danger is coming from.

We no longer have to deal with predators, but our ears are still really useful for walking around our urban jungles and, more generally, for localizing a sound from any direction.

Unfortunately, unlike some other mammals such as horses, we cannot move our ears in any direction, and this is the only reason our outer ear has such a strange shape: sound bounces off its folds and is routed to the middle ear. Because our ears sit on either side of the head, they do not receive audio information at the same time, nor with the same characteristics (timbre, EQ, delay...), and our brain uses these differences to analyze what is coming from where.
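To put a number on that delay between the two ears, here is a minimal sketch based on Woodworth's classic spherical-head approximation. The head radius and speed of sound are assumed typical values, not figures from this article, and the function name is only illustrative.

```python
import math

SPEED_OF_SOUND = 343.0   # m/s, assumed (air at about 20 °C)
HEAD_RADIUS = 0.0875     # m, assumed average head radius

def interaural_time_difference(azimuth_deg):
    """Woodworth's spherical-head estimate of the interaural delay, in seconds.

    azimuth_deg: source angle from straight ahead, between 0 and 90 degrees.
    """
    theta = math.radians(azimuth_deg)
    return (HEAD_RADIUS / SPEED_OF_SOUND) * (theta + math.sin(theta))

for angle in (0, 30, 60, 90):
    itd_us = interaural_time_difference(angle) * 1e6
    print(f"{angle:3d} deg -> {itd_us:6.1f} microseconds")
```

With these assumed values, a source directly to one side arrives at the far ear roughly 0.65 ms late, and a source straight ahead arrives at both ears together.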

Until today, the recording of our surrounding sonic world has been designed to imitate our ear. The phenomenon remains the same: something moves and displaces the air around it, creating a travelling perturbation that modulates the air pressure; this wave hits a sensitive surface, which transforms it into a signal.

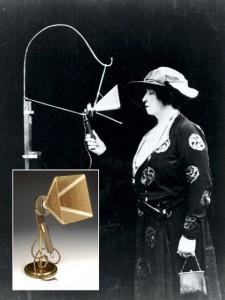

The monophonic pressure transducer, aka the mono capsule microphone, which transforms pressure variations into electrical modulation, was the start. The technique remains the same today, even if progress has allowed us to improve sound quality and reach well beyond our ear's frequency range.

Multiplying the pickup points was the second step.

In 1930, engineer George Clément Levy said: “Stereo will be for the ear, what eyes are waiting for 3D cinema”

In 1931, Alan Blumlein patented the first stereo recording technique, combining the signals from two microphones with a figure-8 directivity, placed at an angle of 90° to each other.

At first, stereo was mostly used for music records and, later, for radio broadcasting.

Finally, we saw the arrival of stereo shows on TV in the 90’s, with the NICAM format.

Take a look at this History of Stereo Sound.

From Stereo to immersive sound

When we talk about stereophonic recording microphones, we are dealing with an array that reproduces our hearing, and initially only what comes from the front. Sounds from the rear are reproduced through reflections of the frontal waves off objects placed behind us and coming back to the front, as early reflections or, more extensively, reverberation.

Let's have a look at some of the main stereo microphone systems:

Blumlein Stereo

The Blumlein stereo set-up is a coincident stereo technique that uses two bidirectional microphones at the same point, angled at 90° to each other. This technique normally gives the best results at shorter distances from the sound source, because bidirectional microphones use pressure-gradient transducer technology and are therefore subject to the proximity effect. At larger distances, these microphones will therefore lose low frequencies. Blumlein stereo produces purely intensity-related stereo information.

One disadvantage is that sound sources located behind the stereo pair are also picked up, and are even reproduced with inverted phase.
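The inverted phase of rear sources follows directly from the figure-8 pattern, whose pickup is the cosine of the angle off-axis. Here is a small sketch assuming ideal figure-8 capsules aimed at +45° and -45°; the function names and the angle convention (0° = front, positive = left) are choices made for this example.

```python
import math

def figure8_gain(source_deg, mic_axis_deg):
    """Ideal bidirectional (figure-8) pickup: cosine of the angle off-axis."""
    return math.cos(math.radians(source_deg - mic_axis_deg))

def blumlein_gains(source_deg):
    """Left/right gains for a source at source_deg (0 = front, positive = left)."""
    left = figure8_gain(source_deg, +45.0)
    right = figure8_gain(source_deg, -45.0)
    return left, right

for angle in (0, 45, 180):
    left, right = blumlein_gains(angle)
    print(f"{angle:4d} deg: L = {left:+.3f}, R = {right:+.3f}")
```

A front source gives equal positive gains in both channels, while a source directly behind the pair gives equal negative gains: same level, inverted polarity.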

XY Stereo

The XY stereo set-up is a coincident stereo technique that uses two cardioid microphones at the same point, typically angled at 90° to each other, to produce a stereo image. Opening angles of 120° to 135°, or even up to 180°, between the capsules are also seen, which change the recording angle and stereo spread. The channel separation is limited, and wide stereo images are not possible with this recording method.

MS Stereo

AB Stereo

ORTF Stereo

The ORTF stereo technique uses two first-order cardioid microphones with a spacing of 17 cm between the microphone diaphragms and a 110° angle between the capsules. This technique is well suited to reproducing stereo cues similar to those the human ear uses to perceive directional information in the horizontal plane. The spacing of the microphones emulates the distance between the human ears, and the angle between the two directional microphones emulates the shadow effect of the human head.
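Both cues can be estimated from the geometry alone. The sketch below assumes ideal cardioid capsules, a distant source (plane wave) and a speed of sound of 343 m/s; only the 17 cm spacing and the 110° angle come from the ORTF definition, and the function names are illustrative.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, assumed
SPACING = 0.17          # m, ORTF spacing between the diaphragms
HALF_ANGLE = 55.0       # degrees, half of the 110° opening

def cardioid_gain(off_axis_deg):
    """Ideal first-order cardioid: 0.5 * (1 + cos(angle off-axis))."""
    return 0.5 * (1.0 + math.cos(math.radians(off_axis_deg)))

def ortf_cues(source_deg):
    """Return (time difference in ms, level difference in dB) for a distant
    source at source_deg (0 = front, positive = towards the left mic)."""
    delay = SPACING * math.sin(math.radians(source_deg)) / SPEED_OF_SOUND
    left = cardioid_gain(source_deg - HALF_ANGLE)
    right = cardioid_gain(source_deg + HALF_ANGLE)
    level_db = 20.0 * math.log10(left / right)
    return delay * 1e3, level_db

for angle in (0, 30, 60, 90):
    delay_ms, level_db = ortf_cues(angle)
    print(f"{angle:3d} deg: {delay_ms:.3f} ms, {level_db:+.1f} dB")
```

Unlike the coincident set-ups, the pair delivers a time difference (up to about half a millisecond with these assumptions) on top of the level difference.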

Binaural Stereo

Invented in the late '20s, the binaural recording technique uses two omnidirectional microphones placed in the ears of a dummy head, with or without a torso. This two-channel system emulates the human perception of sound and provides the recording with important aural information about the distance and direction of the sound sources. It relies on the HRTF (Head-Related Transfer Function), a mathematical description of how the head interferes with the path of the propagated signal. When replayed on headphones, the listener experiences a spherical sound image in which all the sound sources are reproduced with the correct spherical direction.

Each system has its own specificity and its own ability to reproduce the sound space. Take a look at this page, where you'll find really interesting audio comparisons between these different systems "listening" to the same source (audio examples at the bottom).
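In processing terms, an HRTF is usually applied by convolving a mono signal with a pair of head-related impulse responses (HRIRs), one per ear. The sketch below uses two made-up, four-sample HRIRs purely for illustration; real ones are measured on a head and are much longer, and the function names are hypothetical.

```python
def convolve(signal, impulse_response):
    """Direct-form convolution of two lists of samples."""
    out = [0.0] * (len(signal) + len(impulse_response) - 1)
    for i, s in enumerate(signal):
        for j, h in enumerate(impulse_response):
            out[i + j] += s * h
    return out

# Toy HRIRs for a source on the listener's left (made-up values, for
# illustration only; real HRIRs are measured and far longer).
HRIR_LEFT = [1.0, 0.0, 0.0, 0.0]    # near ear: immediate, full level
HRIR_RIGHT = [0.0, 0.0, 0.0, 0.5]   # far ear: 3 samples later, quieter

def binauralize(mono):
    """Return (left, right) ear signals for a mono input."""
    return convolve(mono, HRIR_LEFT), convolve(mono, HRIR_RIGHT)

left, right = binauralize([1.0, -1.0])
print(left)
print(right)
```

Even with these crude responses, the far ear receives the signal later and quieter, which are the two basic cues a dummy head captures acoustically.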

Audio comparison between different systems

The binaural system differs from the others, and has seen some enhancements and new ideas like the 3dio system.

Listen to this demonstration published by The Verge, and take a walk around NYC.

As we'll see below, we're now at a turning point in the ways of immersing the listener in an enveloping sonic world.

3D SOUND ?

We could talk about new and old multichannel recording/playback systems, but we know that most listeners are in a stereo configuration in their everyday life, so this article focuses on stereophony.

If we look at recent events, something is going on in the stereo world, and the news is, as usual, coming from the image world.

Just as videogames do in first-person games, videomakers wondered how to offer images in which we could "turn our head", so they invented 360° videos (sites like Reddit or Vrideo are good examples). But what about sound?

TV is particularly interested, and the German TV channel ZDF is currently working on a documentary in 4K video and 3D sound (see here).

A nice BBC online project called Unearthed has been initiated too, which goes deeper into binaural technology and its extension to 3D sound.

You're immersed in the equatorial forest, following a day in the life of a hummingbird.

Oculus Rift, a pioneer in 3D visualisation, now also provides a 3D audio system.

Simultaneously, they invented a capturing system, an 'Audio Panoramic Camera': an 8-inch sphere with 64 mics and 5 cameras embedded, for capturing panoramic video with expansive sound to match.

the Crescent Bay prototype

We can now speak about Audio VR, which, in combination with binaural recordings, will lead to a future full of immersive ideas, bringing us closer to reality.

How to build immersive (and binaural) sounds from scratch?

We all have great libraries or personal recordings that we wish were more immersive than they are.

There are a number of useful tools that allow you to do this.

From mono elements to binaural

Take a look at Noise Makers Binauralizer®: this plug-in uses HRTFs (Head-Related Transfer Functions) to process and spatialize your sources into binaural.

Some time ago, Wave Arts released a plugin called Panorama®.

Panorama combines HRTF-based audio panning with acoustic environment modeling, including wall reflections, reverberation, distance modeling, and the Doppler pitch effect.

It reproduces psychoacoustic sound localization and distance cues, allowing you to pan sounds in three dimensions: not just left and right, but up, down, front, back, near, and far.
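Two of the cues listed above have simple textbook forms: level falls with distance, and a moving source is heard at a shifted pitch. The sketch below shows both under free-field assumptions; it is not Panorama's actual implementation, and the function names and the 343 m/s speed of sound are assumptions made for this example.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, assumed

def distance_gain_db(distance_m, reference_m=1.0):
    """Free-field level change relative to the reference distance
    (inverse-distance law: about -6 dB per doubling of distance)."""
    return -20.0 * math.log10(distance_m / reference_m)

def doppler_frequency(source_hz, radial_speed_ms):
    """Perceived frequency for a stationary listener.

    radial_speed_ms > 0 means the source is approaching.
    """
    return source_hz * SPEED_OF_SOUND / (SPEED_OF_SOUND - radial_speed_ms)

print(f"{distance_gain_db(2.0):.2f} dB at 2 m")
print(f"{doppler_frequency(440.0, 20.0):.1f} Hz approaching at 20 m/s")
print(f"{doppler_frequency(440.0, -20.0):.1f} Hz receding at 20 m/s")
```

A real spatializer combines such cues with HRTF filtering and room modeling; here they are kept separate to show what each one contributes.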

Their demo wasn't really convincing, but it remains one of the first tools to use this technique.

For those of you who are interested in VR audio, we recommend taking a look at the twobigears.com site.

These (French) guys offer a complete plugin-based workflow for creating VR sounds, which can be linked to Oculus Rift-like monitoring systems and even integrates head tracking!

Wherever you look, sound will follow you!

Take a look!

Finally, and because this is a work in progress, Oculus offers the Oculus Audio SDK, a plugin with a UI designed for developers.

Once placed, the plugin UI provides access to several parameters, including gain, near/far distances, 3D position, reverb, and reflection. The 2D grid (with views for both X/Z and X/Y planes) provides a visualization of the current 3D position and near/far settings, and allows for zooming in and out with the Scale(m) knob.

You can download it here.

From BFormat to binaural

We haven't talked about Soundfield's BFormat yet, but it deserves a whole article, which will come soon!

In the meantime, take a look at these wonderful plugins, which decode BFormat into individual axis sounds, mix BFormat, or transform mono or multichannel sounds into BFormat.

Daniel Courville did great work with his plug-in suite in VST and Audio Unit formats for OS X, which gives artists and producers the tools they need to work with Ambisonic surround sound technology inside mainstream Digital Audio Workstation (DAW) software like Steinberg Nuendo or Cubase.

HARPEX® is a fantastic decoder that transforms BFormat into many other formats, including binaural, 5.1, 7.1, etc.

And, of course, there are the plugins from TSL Products, like Soundfield SurroundZone2, a Swiss Army knife for decoding BFormat.

To finish, you can grab some preconfigured sessions with plugin inserts and routings for various DAWs (Pro Tools, Pyramix, Nuendo, Reaper, etc.) and various plugins (Surround Zone, B2X, Harpex-B, Double M/S Tool, etc.) from our excellent colleagues at SurLib, who specialize in surround ambiences.
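To give an idea of what such encoders and decoders do, here is a minimal sketch of first-order BFormat: a mono sample is encoded into the four channels W, X, Y and Z, then decoded to a stereo pair of virtual microphones. It assumes the traditional convention in which W carries a 1/sqrt(2) gain and azimuth is positive towards the left; the function names are hypothetical and not taken from any of the plugins above.

```python
import math

def encode_bformat(sample, azimuth_deg, elevation_deg=0.0):
    """Encode one mono sample into first-order B-format (W, X, Y, Z),
    using the traditional convention where W carries a 1/sqrt(2) gain."""
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    w = sample / math.sqrt(2.0)
    x = sample * math.cos(az) * math.cos(el)
    y = sample * math.sin(az) * math.cos(el)
    z = sample * math.sin(el)
    return w, x, y, z

def virtual_mic(bformat, azimuth_deg, pattern=0.5):
    """Decode a horizontal virtual microphone aimed at azimuth_deg.

    pattern: 1.0 = omni, 0.5 = cardioid, 0.0 = figure-8.
    """
    w, x, y, _ = bformat
    az = math.radians(azimuth_deg)
    return (pattern * math.sqrt(2.0) * w
            + (1.0 - pattern) * (x * math.cos(az) + y * math.sin(az)))

# A source 45° to the left, decoded to two virtual cardioids at +/-45°.
b = encode_bformat(1.0, 45.0)
left = virtual_mic(b, +45.0)
right = virtual_mic(b, -45.0)
print(f"L = {left:.3f}, R = {right:.3f}")
```

Because the microphone pattern and direction are chosen at decode time, the same recording can be turned into an XY-like pair, a Blumlein-like pair, or any other orientation after the fact.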

Stay tuned!

In a future article, we'll talk about the future of sound effects in these formats.

redlibraries is already involved in research to provide collections in line with those future developments.

Related links:

UnEarthed – Research & Development : all about the project.

MPEG_H “cinematic” trailer: future developments of this format (not only for 3D audio – really interesting!)

“The Box” – a video trailer using binaural and multi channel sources.

Video reports about the MPEG_H Alliance

Oculus Rift – Crescent Bay: some info about the prototype.

Binaural in practice: really interesting article about binaural tips and tricks 😉